Abstract

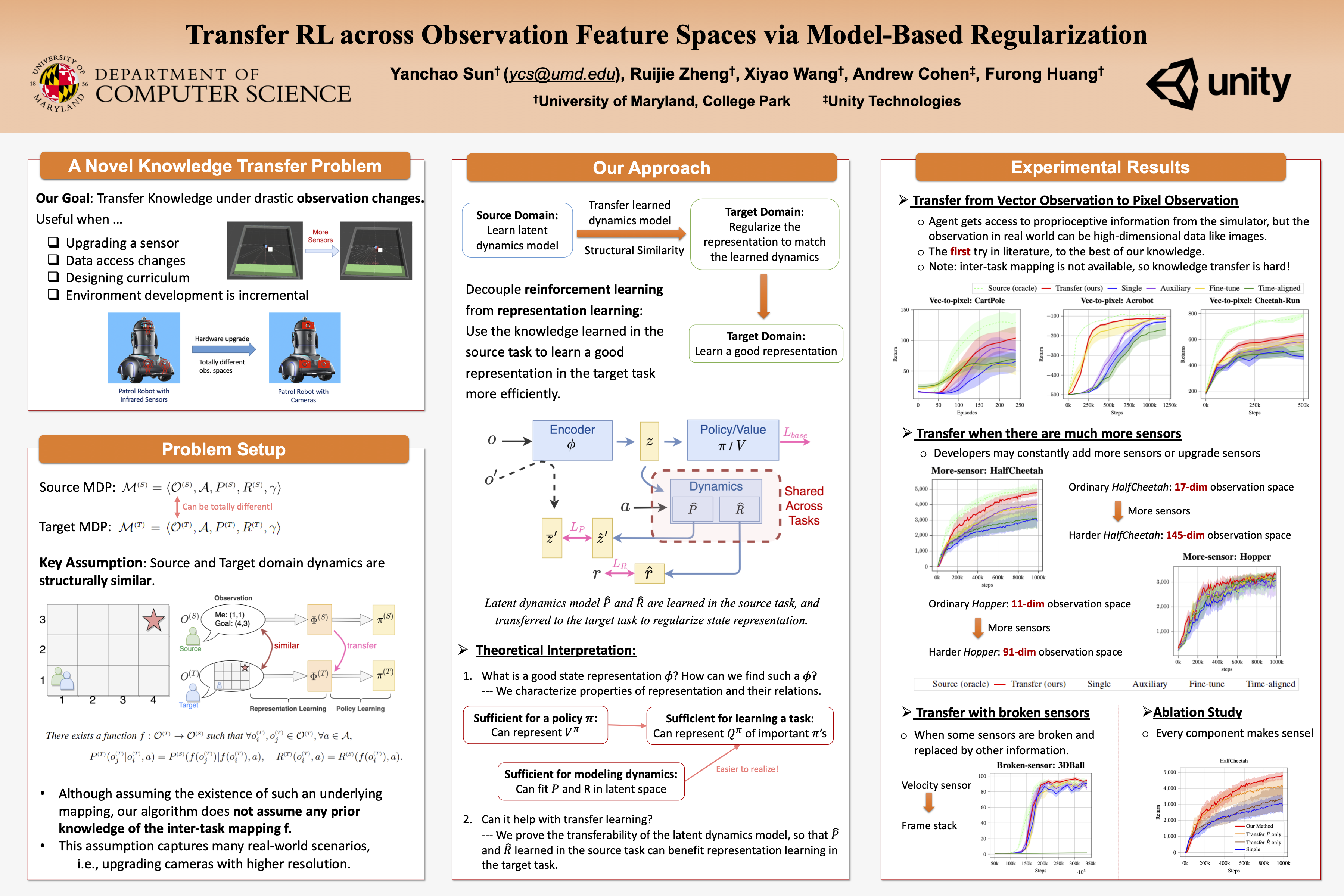

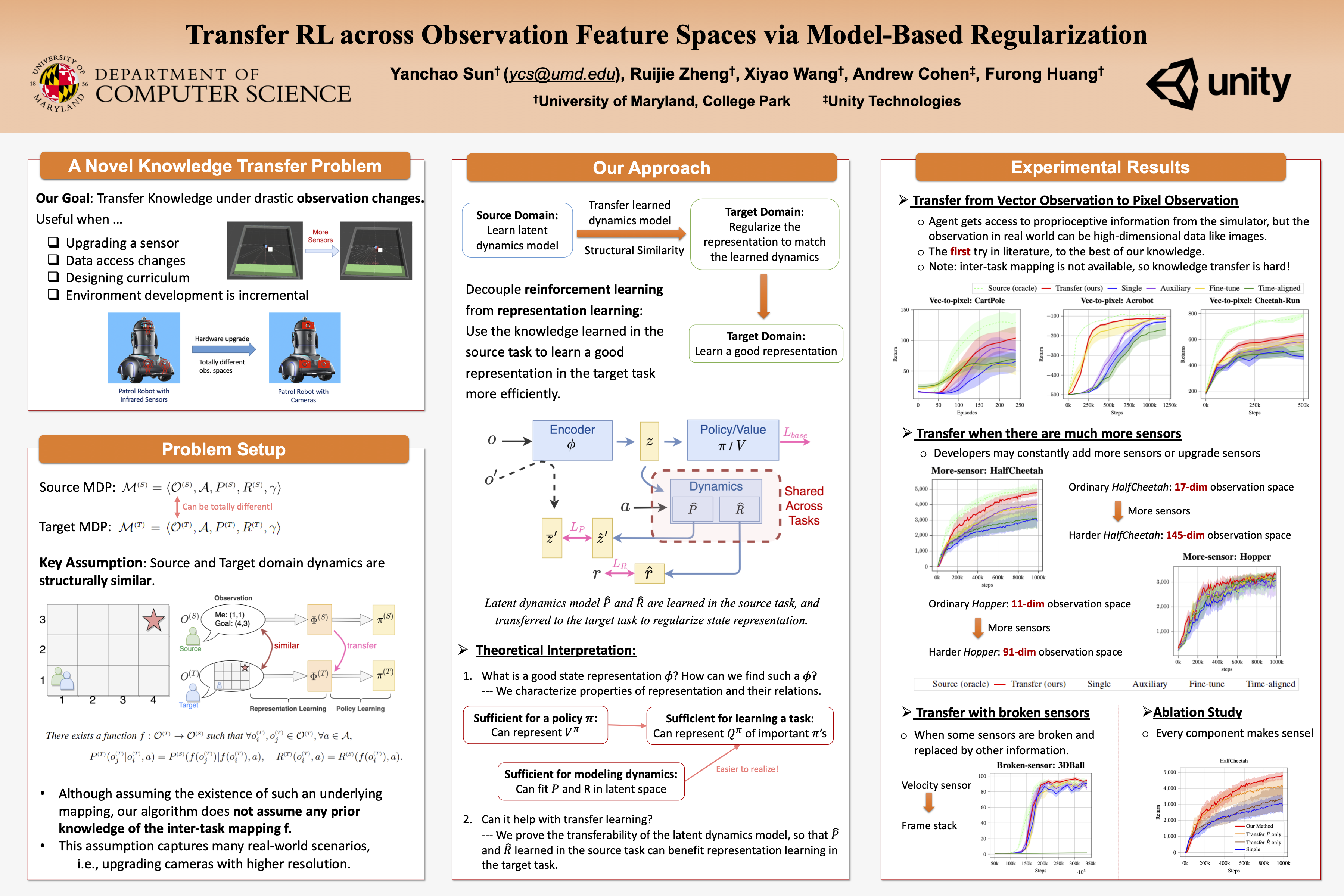

In many reinforcement learning (RL) applications, the observation space is specified by human developers and restricted by physical realizations, so it may change dramatically over time (e.g., an increased number of observable features). When the observation space changes, however, the previous policy will likely fail because of the mismatch in input features, and a new policy must be trained from scratch, which is inefficient in terms of both computation and sample complexity. Guided by theoretical insights, we propose a novel algorithm that extracts the latent-space dynamics in the source task and transfers the learned dynamics model to the target task, where it serves as a model-based regularizer. Our algorithm handles drastic changes in the observation space (e.g., from vector-based to image-based observations) without requiring any inter-task mapping or any prior knowledge of the target task. Empirical results show that our algorithm significantly improves the efficiency and stability of learning in the target task.
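To make the idea of a transferred dynamics model acting as a regularizer more concrete, here is a minimal Python sketch under strong simplifying assumptions. The abstract does not specify the model class or the loss, so the linear latent model (`A`, `B`, `w`), the squared-error penalty, the toy 3-feature target observations, and the names `model_regularizer`, `target_objective`, and `lam` are all hypothetical and illustrative; this is not the authors' implementation.

```python
# Illustrative sketch only (NOT the authors' method): a latent model assumed to
# have been learned in the source task is frozen and reused to penalize a new
# target-task encoder. The linear model and every name here are assumptions.

def matvec(M, v):
    """Multiply matrix M (a list of rows) by vector v."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

# Hypothetical frozen source-task latent model:
# dynamics z' = A z + B a, reward r = w . z
A = [[1, 1], [0, 1]]
B = [[1], [0]]
w = [1, -1]

def latent_step(z, act):
    return [p + q for p, q in zip(matvec(A, z), matvec(B, act))]

def model_regularizer(encode, transitions):
    """Mean squared dynamics error plus reward error of the frozen model,
    evaluated on target-task transitions through the target encoder."""
    total = 0.0
    for obs, act, rew, next_obs in transitions:
        z, z_next = encode(obs), encode(next_obs)
        pred_next = latent_step(z, act)
        total += sum((p - q) ** 2 for p, q in zip(pred_next, z_next))
        total += (dot(w, z) - rew) ** 2
    return total / len(transitions)

def target_objective(rl_loss, reg, lam):
    """Hypothetical target-task loss: RL loss plus weighted regularizer."""
    return rl_loss + lam * reg

# Toy target task with a NEW observation space of 3 features [z0, z1, z0 + z1].
def observe(z):
    return [z[0], z[1], z[0] + z[1]]

def make_transition(z, act):
    return (observe(z), act, dot(w, z), observe(latent_step(z, act)))

transitions = [make_transition([1, 2], [1]), make_transition([0, -1], [2])]

def good_encoder(o):
    return [o[0], o[1]]  # maps into the source latent space

def bad_encoder(o):
    return [o[2], o[0]]  # a latent space inconsistent with the frozen model

print(model_regularizer(good_encoder, transitions))  # 0.0
print(model_regularizer(bad_encoder, transitions))   # 12.5
```

In this toy setting, an encoder that maps the new observations into the latent space the frozen model was built for incurs zero penalty, while a mismatched encoder is penalized. Under these assumptions, a target-task learner would minimize `target_objective(rl_loss, reg, lam)`, so the transferred model steers the new encoder toward representations consistent with the source-task dynamics.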

Cite This Paper

@inproceedings{sun2022transfer,
  title={Transfer {RL} across Observation Feature Spaces via Model-Based Regularization},
  author={Yanchao Sun and Ruijie Zheng and Xiyao Wang and Andrew E Cohen and Furong Huang},
  booktitle={International Conference on Learning Representations},
  year={2022},
  url={https://openreview.net/forum?id=7KdAoOsI81C}
}